NFV Deployment Tracker: Europe 2018 Update

Updated analysis of STL Partners’ NFV deployment tracker. What have Europe’s major telcos done, how are vendors faring, and who leads the pack?

Veon (rebranded VimpelCom) has embarked upon a bold strategy, shedding its network to move from being a traditional telco business to an agile consumer IP communications platform. We analyse its new strategy, its risks, and what it will need to do to succeed.

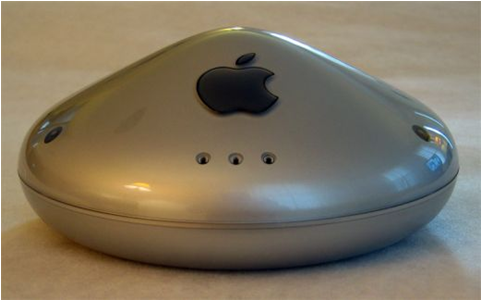

Apple is weakening Samsung Electronics’ grip on the high end of the handset market, lowering the Korean company’s profitability and capacity to compete effectively. After a series of largely unsuccessful attempts to break into software and services, a daring option for Samsung is to seek a strong, strategic alliance with Google to enable both companies to mount a serious challenge to Apple’s dominance in the affluent demographic. Telcos could back such an alliance in return for a profitable role in the service layer. This report analyses the strategic rationale for such an approach.

Free’s shock bid for T-Mobile USA will stretch its finances and management capacity to the limit. Can Free’s package of tactics, technology, and procedures work in the US context?

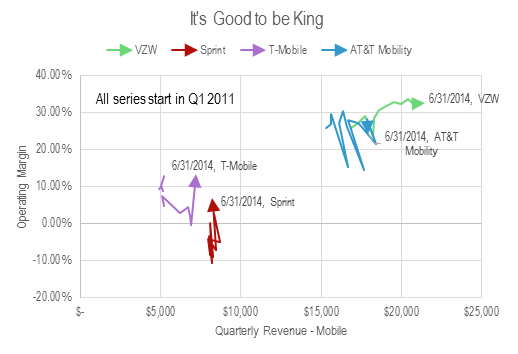

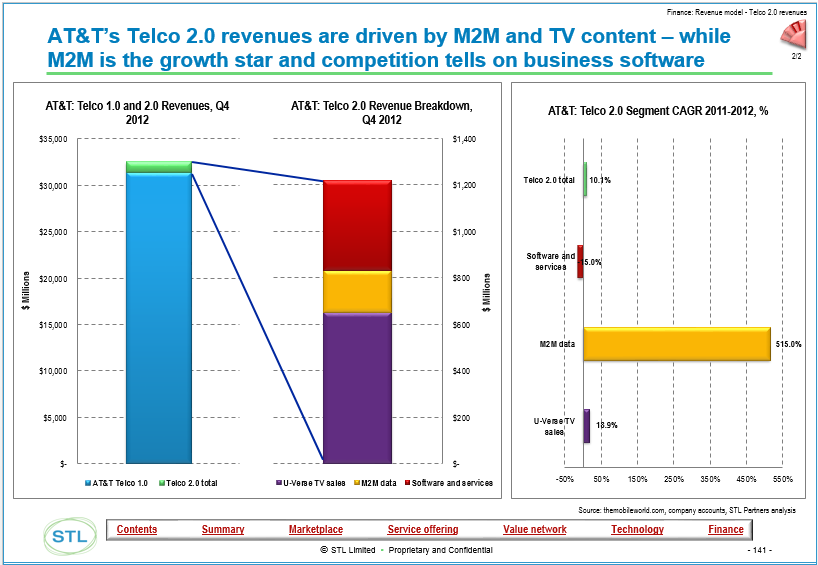

Verizon and Comcast have invested in high-bandwidth fibre and cable networks, whereas AT&T has until recently focused on U-Verse, an IPTV play. Which strategy is winning out and why? The answer is surprising and may transform the US and other markets, and there are parallels with Apple and Samsung’s ‘deep value’ strategies of investing in assets that are hard to replicate.

Key trends, tactics, and technologies for mobile broadband networks and services that will influence mid-term revenue opportunities, cost structures and competitive threats. Includes consideration of LTE, network sharing, WiFi, next-gen IP (EPC), small cells, CDNs, policy control, business model enablers and more. (March 2012, Executive Briefing Service, Future of the Networks Stream).

Trends in European data usage

Regardless of business strategy, the development of ‘Smart Pipes’ (more intelligent networks) will be a key driver of shareholder returns from operators. Smarter networks will also benefit network users, both upstream service providers and end users. Operators, along with their vendors and partners, will need to compete to be the smartest. What are Smart Pipes, why are they needed, and what are the key strategies employed to develop them? (February 2012, Foundation 2.0, Future of the Networks Stream).

Facebook user saturation bubble chart

The telecoms industry often puts so-called OTT (over-the-top) players like Google and Facebook at the forefront of its concerns, as they pose new competition for services and applications. But what about encroachment of companies “underneath” the telcos, displacing them from their core asset, the network? Telco 2.0 examines the strategic threats and opportunities from wholesale providers, outsourcers and government-run broadband networks. (January 2012, Executive Briefing Service, Future of the Networks Stream).

UTF Image Jan 2012

An analysis of the status of LTE, the next generation of wireless network technology, including a round-up of early operator trials, and views on prospects for key vendors by Telco 2.0 partners Arete Research. (December 2011, Executive Briefing Service, Future of the Networks Stream).

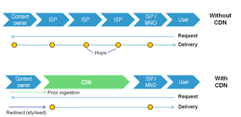

Content Delivery Networks (CDNs) are becoming familiar in the fixed broadband world as a means to improve the experience and reduce the costs of delivering bulky data like online video to end-users. Is there now a compelling need for their mobile equivalents, and if so, should operators partner with existing players or build / buy their own? (August 2011, Executive Briefing Service, Future of the Networks Stream).

Telco 2.0 Six Key Opportunity Types Chart July 2011

Innovation appears to be flourishing in mobile broadband. At the Telco 2.0 EMEA Executive Brainstorm earlier this month we saw working applications that enable (a) users to monitor and control their network usage and services and (b) operators to support ‘dynamic pricing’. Despite growing enthusiasm for LTE, delegates considered traffic offloading and network sharing strategies to be at least as effective in managing costs. (May 2011, Executive Briefing Service, Future of the Networks Stream).

Mobile Broadband Fuel Gauge

By building or acquiring public WiFi networks for tens of millions of dollars, highly innovative fixed players in the UK are stealthily removing billions of dollars of value from 3G and 4G mobile spectrum as smartphones and other data devices become increasingly carrier agnostic. What are the lessons globally? (April 2011, Executive Briefing Service, Future of the Networks Stream).

‘Net Neutrality’ has gathered increasing momentum as a market issue, with AT&T, Verizon, major European telcos, Google and others all making their points in advance of the Ofcom, EC, and FCC consultation processes. This is Telco 2.0’s input, analysis and recommendations. (Sept 2010, Foundation 2.0, Executive Briefing Service, Future of the Networks Stream).

The Executive Summary and an extract from a report on key issues for operators seeking to optimise mobile broadband network economics, which were debated at the recent Telco 2.0 EMEA Brainstorm in London. (Special Briefing, May 2010, Executive Briefing Service, Future of the Networks Stream).

An introduction to the four strategy scenarios we see playing out in the market – ‘Telco 2.0 Player’, ‘Happy Piper’, ‘Device Specialist’, and ‘Government Department’ – part of a major new report looking at innovation in mobile and fixed broadband business models. (March 2010, Foundation 2.0, Executive Briefing Service, Future of the Networks Stream).

As part of our new ‘Broadband End-Games’ report, we’ve been defining in detail the opportunities for telcos to distribute 3rd party content and digital goods in new ways. (December 2009, Executive Briefing Service, Future of the Networks Stream).

Amazon’s stock continues to beat the bull market. Its success is based on a four-level transformation to a ‘two-sided’ strategy. What are the lessons for would-be platform players in all parts of the Telecoms, Media and Technology sector? (November 2009, Executive Briefing Service, Dealing with Disruption Stream).