Liquid cooling: The next consolidation wave in data centre infrastructure

As AI workloads push rack densities higher and liquid cooling becomes essential infrastructure, a fragmented vendor landscape is ripe for consolidation.

STL Partners’ 26 predictions for 2026, focusing on change and growth in the telco industry

This report assesses the impacts of delays in data centre construction on operational financial metrics, budget and internal rate of return, and examines the role of effective reporting in ensuring on-time delivery.

It was no surprise that AI dominated MWC this year. But there were lots of other notable trends on the rise – including cybersecurity and data centres – and some that were disappointingly overlooked. Find out what the STL Partners team saw and missed at MWC 2025.

Part two of this three-part report series examines the influence and impact of external forces that will shape telcos’ journeys as they adapt to survive and compete in the future.

All telcos know they need to change. We believe the defining characteristic of those that will grow most is a clear focus on where and how they can create value beyond connectivity. This report lays out the two viable paths forward, and six steps all telcos must take in this new version of the Telco 2.0 vision.

Facebook set up the Telecom Infra Project in 2016 to drive open source standards in core telecoms hardware and network operations. In this report we examine the implications of this project for telcos and other industry players, and recommend how they should respond.

MEC (Mobile / Multi-Access Edge Computing) puts compute resources at the edge of telco networks. These servers can be used for distributing internal network functions – typically linked with NFV deployments – or made available to third-party developers as part of an “edge cloud” service offering. What are the realistic use cases, and can telcos monetise them?

VoLTE solves the complex problem of providing voice services over a 4G mobile data network. Although it may allow 2G and 3G networks to be switched off and their spectrum re-farmed for newer networks, declining call revenues (and, in some cases, declining call volumes) are dampening the appetite to invest in VoLTE. However, with voice beyond telephony on the rise, for example through AI-powered voice assistants and video calling, can telcos use VoLTE as an opportunity to develop new IP-based voice and messaging offerings?

5G deployments will need new allocations of radio spectrum, particularly to achieve the promised speeds and to target new IoT use cases. However, the official process for releasing new frequencies is slow and cumbersome, and some countries may short-circuit it. At the same time, the case is growing for new sharing mechanisms that allow industrial and vertical players to acquire spectrum for their own networks, outside MNO control. What should telcos do?

Investment in fintech has increased by 500% in the last three years. Interest and investment have spiked as fintech companies seek to leverage new sources of data to develop disruptive offerings across a broad range of financial service areas (e.g. payments, lending and funding). The scale and scope of this investment and activity represent a potential paradigm shift within financial services. Telcos, which have a long history of developing financial services (e.g. mobile money), need to understand this changing landscape. This report explores why fintech is happening now and maps where and how it is disrupting established financial services.

There’s a lot at stake in 5G, and many players are understandably pushing their own views and strategies hard. Our latest analysis summarises the story so far, the barriers, players and timelines, and how we see it playing out.

Although the shape of the cloud industry turned out better than expected, most telco strategies in the cloud haven’t delivered. We investigate why, what has led to success, and what telcos need to learn to do differently.

Free has won market share and customer plaudits alike with its disruptive and original strategy in the French telecoms market. Its parent company Iliad has now developed an ingenious strategy for cloud. Our latest report shows how, and highlights lessons for all operators with ambitions to be more than a ‘pipe’.

This report will help digital commerce players assess some tough technology and strategy choices in the ongoing mobile marketing and commerce battle. For example, will bricks-and-mortar merchants embrace NFC, Bluetooth Low Energy (BLE) or cloud-based solutions? If NFC does take off, will SIM cards or trusted execution environments be used to secure services? Should digital commerce brokers use SMS, in-app notifications or IP-based messaging services to interact with consumers? What are the big players backing, and what will be the key indicators that a specific technology is likely to win?

150 senior execs from Vodafone, Telefonica, Etisalat, Ooredoo (formerly Qtel), Axiata and Singtel supported our technology survey for the Telco 2.0 Transformation Index. This analysis of the results includes findings on prioritisation, alignment, accountability, speed of change, skills, partners, projects and approaches to transformation. It shows that there are common issues around urgency, accountability and skills, and interesting differences in priorities and overall approach to technology as an enabler of transformation. (November 2013, Executive Briefing Service, Transformation Stream.)

Software-Defined Networking (SDN) is a technological approach to designing and managing networks that has the potential to increase operator agility, lower costs and disrupt the vendor landscape. Its initial impact has been within leading-edge data centres, but it could also spread into many other network areas, including core public telecoms networks. This briefing analyses its potential benefits and use cases, outlines strategic scenarios and key action plans for telcos, summarises key vendor positions, and explains why it is so important for both the telco and vendor communities to adopt and exploit SDN capabilities now. (May 2013, Executive Briefing Service, Cloud & Enterprise ICT Stream, Future of the Networks Stream.)

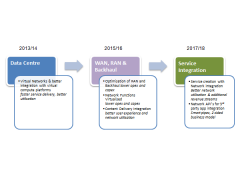

Potential Telco SDN/NFV Deployment Phases (May 2013)