Understanding the unconnected

Despite the growing maturity of the communications industry, why do more than half the world’s people still lack a dedicated Internet connection? And what can be done about it, and by whom?

Key trends, tactics, and technologies for mobile broadband networks and services that will influence mid-term revenue opportunities, cost structures and competitive threats. Includes consideration of LTE, network sharing, WiFi, next-gen IP (EPC), small cells, CDNs, policy control, business model enablers and more. (March 2012, Executive Briefing Service, Future of the Networks Stream).

Trends in European data usage

Innovation appears to be flourishing in mobile broadband. At the Telco 2.0 EMEA Executive Brainstorm earlier this month we saw working applications that enable (a) users to monitor and control their network usage and services and (b) operators to support ‘dynamic pricing’. Despite growing enthusiasm for LTE, delegates considered traffic offloading and network sharing strategies to be at least as effective in managing costs. (May 2011, Executive Briefing Service, Future of the Networks Stream)

Mobile Broadband Fuel Gauge

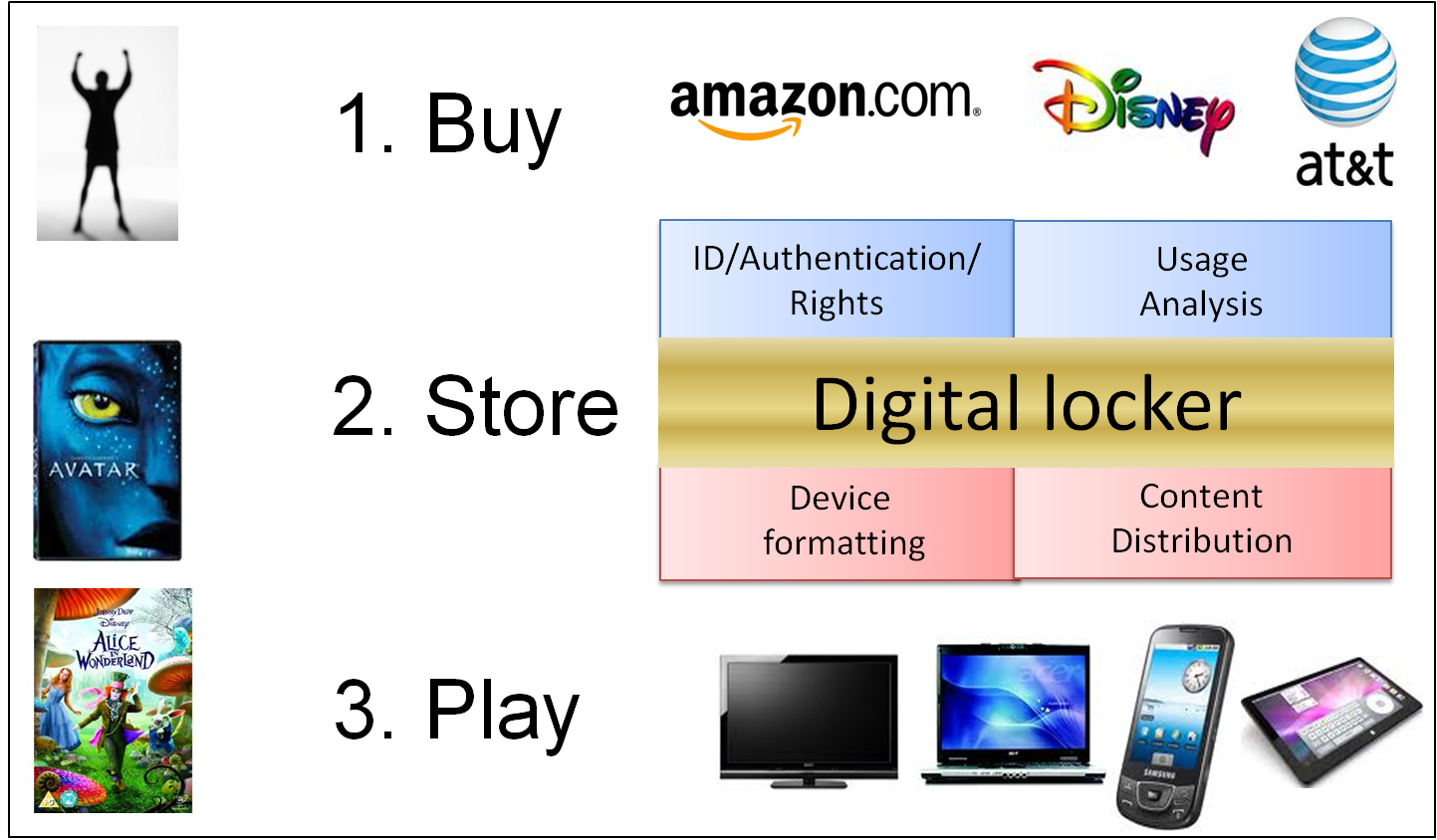

Telco assets and capabilities could be used much more to help Film, TV and Gaming companies optimize their beleaguered business model. An extract from our new 38-page Executive Briefing report examining the opportunities for ‘Hollywood’ and telcos. (Executive Briefing, August 2010)

Managing the role of new device categories in new and existing fixed and mobile business models is a key strategic challenge for operators. This report includes analysis of the practicalities and challenges of creating customised devices, best and worst practice, inserting ‘control points’ in open products, and the role of ‘ODMs’, and reviews leading alternative approaches. (Executive Briefing, Aug 2010)