Telecom traffic: Heading for the fast lane?

Will artificial intelligence, immersive services, automated transportation and robotics drive a substantial increase in telecom traffic between now and 2032?

The delivery of ‘mixed reality’ experiences through various forms of AR / VR ‘glasses’ is improving, and Apple may be planning to enter the fray alongside other heavyweight players such as Amazon and Google. We review the realistic timescales, and the opportunities for telcos.

This report explores how the cloud gaming market is likely to evolve and what this means for telcos. Beyond providing better connectivity through 5G and edge computing, there are several ways in which telcos can add value to the cloud gaming ecosystem.

VoLTE solves the complex problem of providing voice services over a 4G mobile data network. Although it may allow 2G and 3G networks to be turned off, and their spectrum re-farmed to other networks, declining call revenues (and in some cases declining volumes) are dampening appetite to invest in VoLTE. However, with voice beyond telephony on the rise, for example through AI-powered voice assistants and video calling, can telcos use VoLTE as an opportunity to develop new IP-based voice and messaging communications offerings?

The rapid growth of Facebook, WhatsApp, WeChat and other Internet-based services has prompted some commentators to write off telcos in the consumer communications market. But many mobile operators retain surprisingly large voice and messaging businesses and still have several strategic options. Indeed, there is much telcos can learn from the leading Internet players’ evolving communications propositions and their attempts to integrate them into broad commerce and content platforms. In this report we examine what opportunities still exist for telcos in this strategically important sector.

Netflix’s success in the US and in Western Europe has demonstrated that consumers are willing to change how they watch and pay for TV and movies. As a result, Netflix’s OTT proposition is challenging traditional pay TV models and changing how new broadband services approach content. For some players Netflix is a threat, and for others an opportunity. So, how should content owners, channels, pay platforms and broadband providers respond?

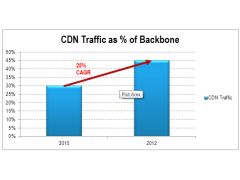

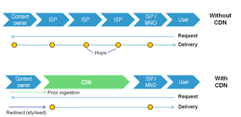

Changing consumer behaviours and the transition to 4G are likely to bring about a fresh surge of video traffic on many networks. Fortunately, mobile content delivery networks (CDNs), which should deliver both better customer experience and lower costs, are now potentially an option for carriers using a combination of technical advances and new strategic approaches to network design. This briefing examines why, how, and what operators should do, and includes lessons from Akamai, Level 3, Amazon, and Google. (May 2013, Executive Briefing Service).

CDN Traffic as Percentage of Backbone May 2013

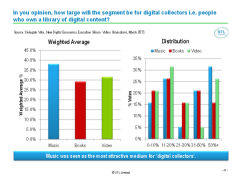

For mobile entertainment services to generate revenues commensurate with the attention they receive, the industry needs to improve ‘discovery’ tools, create more effective creative inventory, and deliver proof of its effectiveness. A summary of the Digital Entertainment 2.0 session of the 2013 Silicon Valley Brainstorm, held on 20 March 2013 at the Intercontinental Hotel, San Francisco. (April 2013)

Digital Entertainment 2.0: What Gets Measured Gets Money

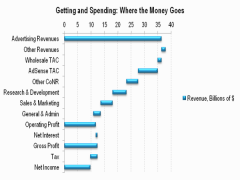

Google’s shares have made little headway recently despite its dominance in search and advertising, and it faces increasing regulatory threats in this area. It needs either to find new sources of value growth or to start paying out dividends, like Microsoft or Apple (or indeed, a telco). Overall, this is resulting in something of a strategic identity crisis. A review of Google’s strategy and its implications for telcos. (March 2012, Executive Briefing Service, Dealing with Disruption Stream).

Google’s Advertising Revenues Cascade

An extract from our 284-page, 124-chart strategy report that analyses the business models, markets, objectives, strategies and modus operandi of the major adjacent players, and their current and future impact on the telecoms industry. The report identifies the areas and options for competition and co-operation, and outlines potential strategies for interacting with each player. It also draws the combined activities of the digital empires – telcos, so-called ‘OTT players’ and others – into the context of the new ‘Great Game’, the battle for power and value in the emerging digital economy. (Page updated February 2012, report published November 2011, Dealing with Disruption stream)

Google Apple Facebook Microsoft Skype Amazon Telco 2.0 Disruptor Report Cover

Our in-depth look at the UK’s highly competitive digital TV market, which reflects many global trends, such as competition between different types of content distributor (LoveFilm, YouTube, Virgin Media, BBC, BSkyB, BT, etc.), channel proliferation, new devices used for viewing, and the increasing prevalence of connected TVs. What are the key trends and who will be the winners and losers? (August 2011, Executive Briefing Service)

Chart from Connected TV Figure 2 telco 2.0

Content Delivery Networks (CDNs) are becoming familiar in the fixed broadband world as a means to improve the experience and reduce the costs of delivering bulky data like online video to end-users. Is there now a compelling need for their mobile equivalents, and if so, should operators partner with existing players or build / buy their own? (August 2011, Executive Briefing Service, Future of the Networks Stream).

Telco 2.0 Six Key Opportunity Types Chart July 2011

A write-up and analysis of new research, plus participants’ and speakers’ views, from the May 2009 Telco 2.0 Executive Brainstorm exploring the challenges of the broadband video crisis. (May 2009, Executive Briefing Service, Future of the Networks Stream).

BSkyB’s platform shows a multi-sided business model in action, bringing value to both upstream and downstream customers and earning decent returns for shareholders.

Online video demand patterns are becoming clearer, but a working economic model is not. What’s next? (July 2008)

The impact of the launch of the BBC’s online video service, iPlayer, on the UK DSL industry, based on live network data. (Feb 2008)