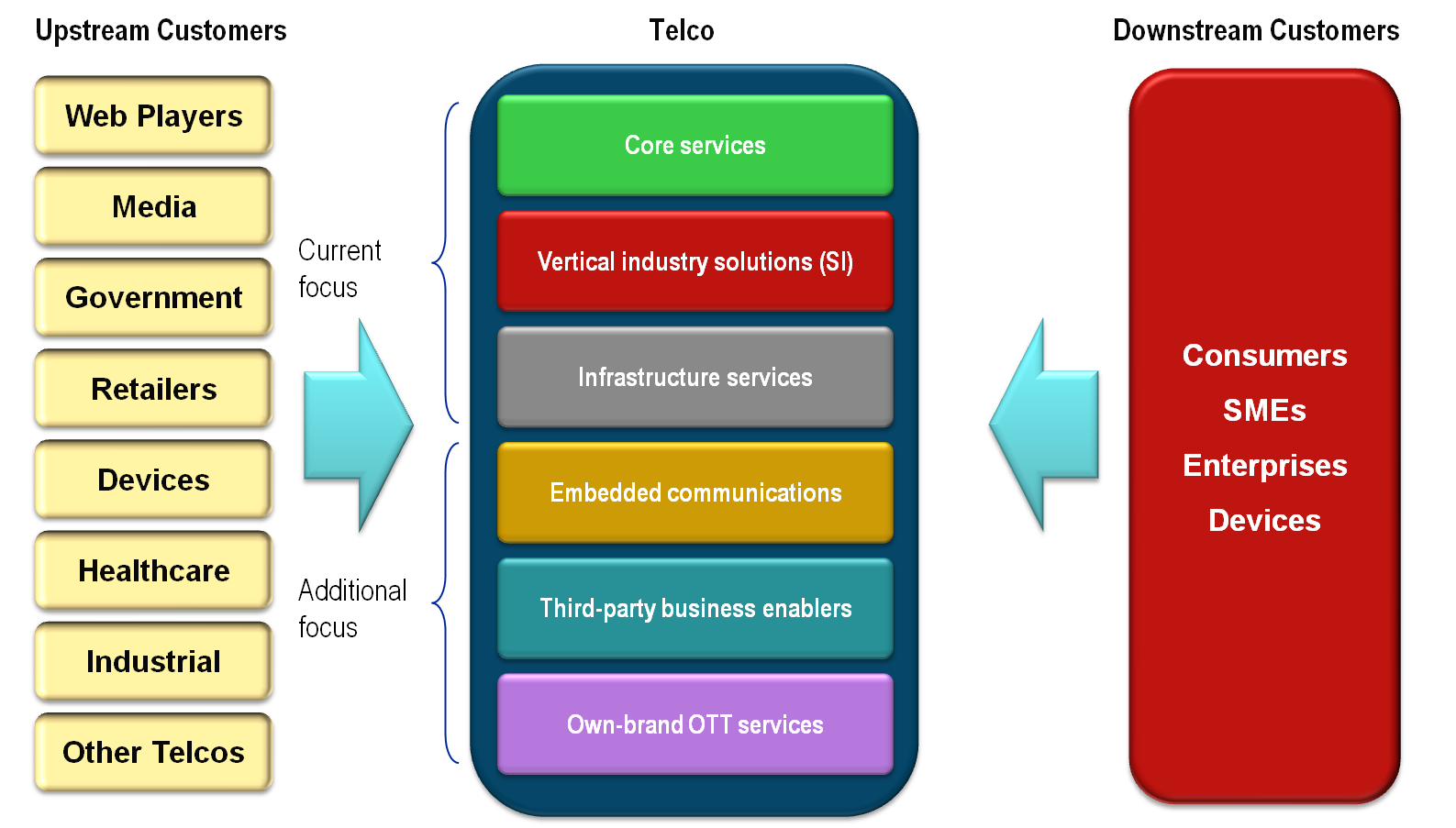

Software Defined Networking (SDN) is a technological approach to designing and managing networks that has the potential to increase operator agility, lower costs, and disrupt the vendor landscape. Its initial impact has been within leading-edge data centres, but it could also spread into many other network areas, including core public telecoms networks. This briefing analyses its potential benefits and use cases, outlines strategic scenarios and key action plans for telcos, summarises key vendor positions, and explains why it is so important for both the telco and vendor communities to adopt and exploit SDN capabilities now. (May 2013, Executive Briefing Service, Cloud & Enterprise ICT Stream, Future of the Networks Stream).

Potential Telco SDN/NFV Deployment Phases, May 2013